Lectures

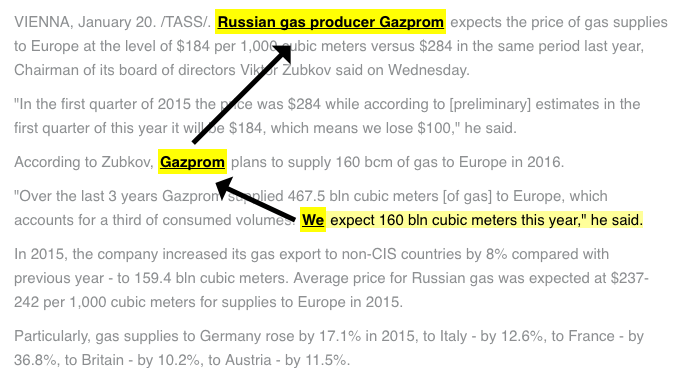

Language models and part-of-speech tagging

Ekaterina Shutova. 2018-10-30.

Abstract Slides Video Further reading

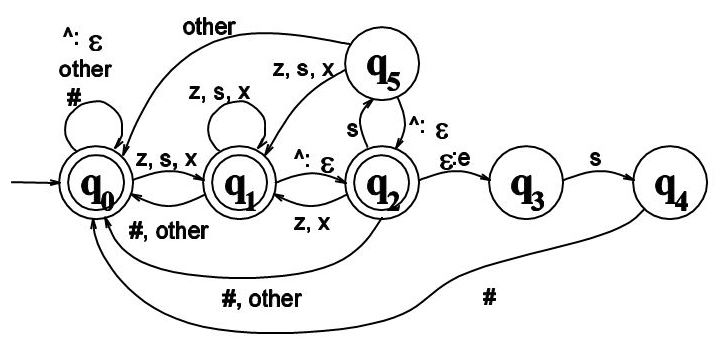

Modelling structure: morphology and syntax

Ekaterina Shutova. 2018-11-04.

Abstract Slides Video Further reading

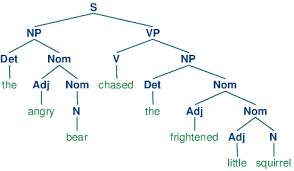

Syntactic parsing (finishing off). Lexical semantics

Ekaterina Shutova. 2018-11-06.

Abstract Slides Video Further reading

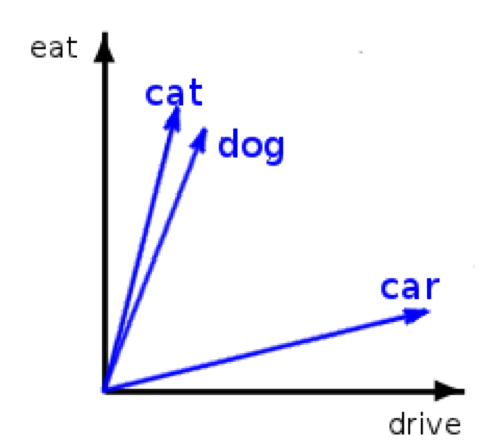

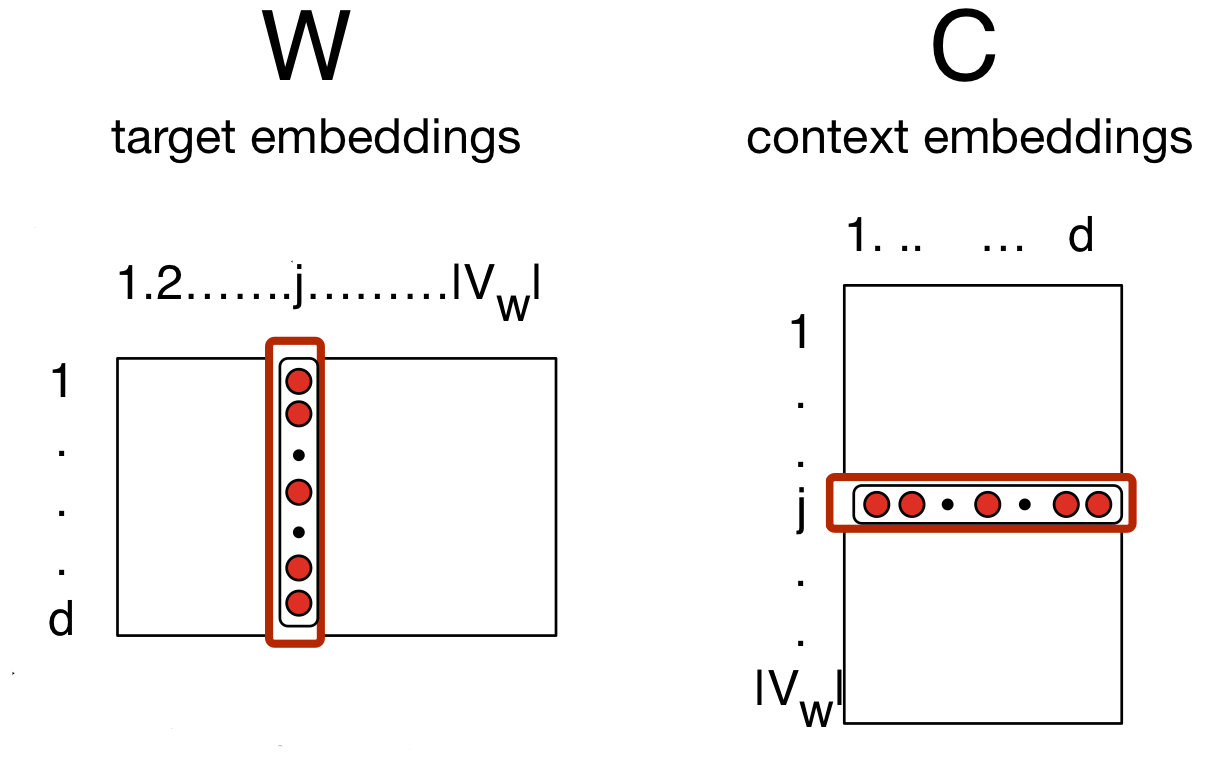

Generalisation and word embeddings

Ekaterina Shutova. 2018-11-13.

Abstract Slides Video Further reading

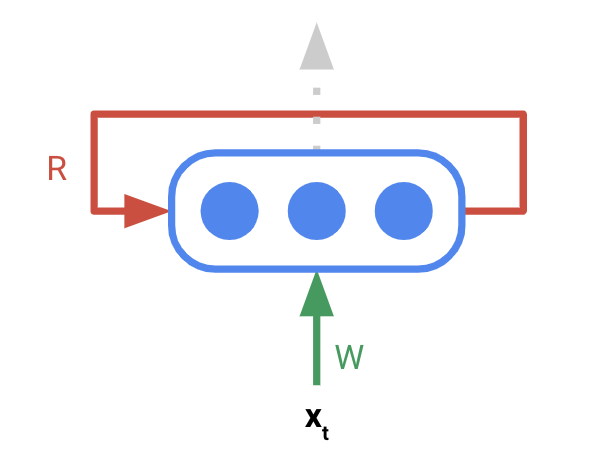

Compositional semantics and sentence representations

Ekaterina Shutova and Sandro Pezzelle. 2018-11-18.

Abstract Slides Video Further reading

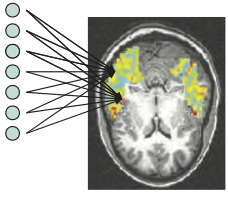

Two guest lectures: NLP and human language processing & Dialogue modelling

Jelle Zuidema and Raquel Fernandez. 2018-11-27.

Abstract Slides Video Further reading

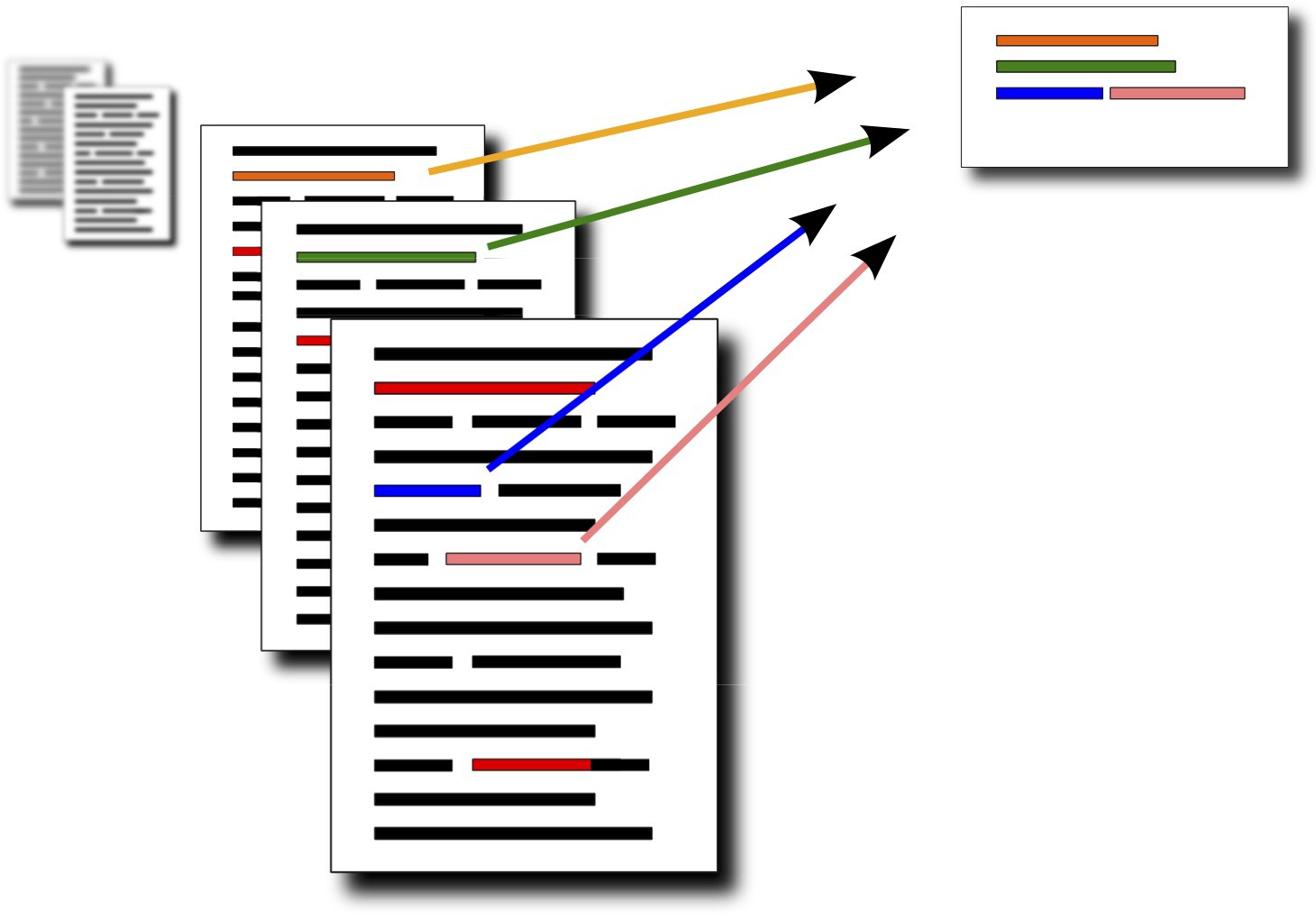

Language generation and summarisation

Ekaterina Shutova. 2018-12-02.

Abstract Slides Video Further reading

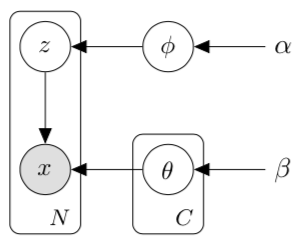

Foundations of Bayesian NLP

Guest lecture by Wilker Aziz. 2018-12-11.

Abstract Slides Video Further reading
